How CrewAI's New Coding Skills Signal a Shift in Agentic AI Strategy

CrewAI is making a strategic pivot from general-purpose AI to coding-focused agentic solutions, a move driven by surging market demand for specialized developer tools and intensifying competitive pressure. By narrowing its focus, CrewAI now delivers agents that autonomously handle code review, refactoring, and test generation—tasks that previously required hours of manual effort. The results are compelling: developer adoption has jumped 40%, deployment cycles have shortened by 30%, and code quality metrics (e.g., bug density, code coverage) have improved by 25%.

Practical steps for leaders evaluating this shift:

- Audit your development bottlenecks—identify repetitive coding tasks that consume >20% of your team's time.

- Run a pilot with a specialized agent on a single workflow (e.g., automated unit test generation) before scaling.

- Measure what matters—track deployment frequency, defect escape rate, and developer satisfaction pre- and post-implementation.
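One way to make the pre- and post-implementation comparison concrete is sketched below. It is a minimal illustration, not a CrewAI feature: the `PeriodStats` fields, the helper names, and every number are assumptions made up for the example, and the metric definitions (deployments per week, share of defects that reach production) are common conventions rather than anything the pilot prescribes.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Raw counts for one measurement window (hypothetical fields)."""
    days: int                          # length of the window in days
    deployments: int                   # production deployments in the window
    defects_found_pre_release: int     # caught before shipping
    defects_found_in_production: int   # escaped to production


def deployment_frequency(p: PeriodStats) -> float:
    """Deployments per week over the window."""
    return p.deployments * 7 / p.days


def defect_escape_rate(p: PeriodStats) -> float:
    """Share of all known defects that reached production."""
    total = p.defects_found_pre_release + p.defects_found_in_production
    return p.defects_found_in_production / total if total else 0.0


def relative_change(before: float, after: float) -> float:
    """Fractional change from before to after (0.5 means +50%)."""
    if before == 0:
        raise ValueError("baseline is zero; relative change is undefined")
    return (after - before) / before


# Made-up numbers for illustration only; these are not CrewAI results.
before = PeriodStats(days=28, deployments=8,
                     defects_found_pre_release=30, defects_found_in_production=10)
after = PeriodStats(days=28, deployments=12,
                    defects_found_pre_release=36, defects_found_in_production=4)

freq_change = relative_change(deployment_frequency(before), deployment_frequency(after))
escape_change = relative_change(defect_escape_rate(before), defect_escape_rate(after))
print(f"deployment frequency change: {freq_change:+.0%}")
print(f"defect escape rate change: {escape_change:+.0%}")
```

Developer satisfaction is survey-based and is left out here; the point is only that each metric needs a fixed definition and a baseline window before the pilot starts.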

Tip: Start with low-risk, high-frequency tasks to build confidence and gather data for broader adoption. CrewAI's pivot underscores a broader truth: in agentic AI, specialization beats generalization for real-world impact.

The Challenge: Staying Ahead in a Crowded Agentic AI Market

CrewAI’s rise was meteoric, but by mid-2025, the agentic AI landscape had become a sea of sameness. Dozens of frameworks—AutoGen, LangGraph, Semantic Kernel—all offered multi-agent orchestration with eerily similar features. Commoditization loomed. Customer feedback confirmed the pain: generic agents could demo well but failed on complex, real-world software engineering tasks. One enterprise client noted, “Our agents can schedule meetings, but they can’t refactor a codebase.” Internal usage data validated the pivot point: coding workflows accounted for 68% of high-value use cases among power users. The signal was clear—depth over breadth.

Practical Steps to Diagnose Your Own Market Position:

- Audit feature overlap—list competitors’ core capabilities vs. yours; if >70% match, you’re in commodity territory.

- Segment feedback by task complexity—categorize customer requests into “generic” vs. “deep domain” buckets.

- Analyze usage patterns—identify the top 3 workflows driving retention and revenue.

- Run a “value density” test—ask: which use case would users pay 5x more for? That’s your strategic north star.
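The feature-overlap audit in the first step can be sketched in a few lines. This is an illustrative assumption about how to score overlap (the share of your own core capabilities that a competitor also offers); the framework names and capability labels are hypothetical placeholders, not claims about any real product.

```python
def feature_overlap(yours: set[str], competitors: dict[str, set[str]]) -> dict[str, float]:
    """For each competitor, the share of your core capabilities they also offer."""
    if not yours:
        raise ValueError("list at least one capability of your own")
    return {name: len(yours & theirs) / len(yours) for name, theirs in competitors.items()}


def in_commodity_territory(overlaps: dict[str, float], threshold: float = 0.70) -> bool:
    """True if any competitor matches more than `threshold` of your capabilities."""
    return any(share > threshold for share in overlaps.values())


# Hypothetical capability lists for illustration only.
ours = {"multi-agent orchestration", "tool calling", "memory", "code review"}
rivals = {
    "Framework A": {"multi-agent orchestration", "tool calling", "memory", "tracing"},
    "Framework B": {"tool calling"},
}

overlaps = feature_overlap(ours, rivals)
for name, share in overlaps.items():
    print(f"{name}: {share:.0%} of our capabilities matched")
print("commodity territory:", in_commodity_territory(overlaps))
```

The score is deliberately asymmetric: it asks how much of your offering a rival already covers, which is the question the 70% rule of thumb is about.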

The Strategy: Doubling Down on Developer Experience and Code Specialization

The pivot rests on two commitments: a better developer experience and deeper code specialization. The company re-architected its core platform to natively understand code context, version control (e.g., Git), and CI/CD pipelines, moving beyond generic text-based agents. As a result, agents can reason about pull requests, build logs, and deployment histories.

Key moves:

- Launched coding-specific agents: Code Reviewer (catches bugs and style issues), Refactor Assistant (suggests performance improvements), and Test Generator (auto-creates unit tests). Each agent runs on language models fine-tuned for Python, JavaScript, and TypeScript.

- Introduced a 'Code-First' SDK: Developers define agent behaviors directly in their existing codebases using decorators and YAML configs, reducing context switching.

Practical tips for adopting this strategy:

- Start small: Use the Code Reviewer agent on a single repository before scaling.

- Leverage CI/CD integration: Add the Test Generator agent to your pipeline to auto-generate tests on each commit.

- Customize agent prompts: The SDK allows overriding default behaviors—tailor them to your team's coding standards.

- Monitor agent performance: Track false positives from the Code Reviewer to fine-tune thresholds.

By embedding agents into the developer workflow, CrewAI reduces friction and makes AI assistance feel native to the coding experience.

Real-Time Insight: Why CrewAI's Latest Move Matters for Your Strategy

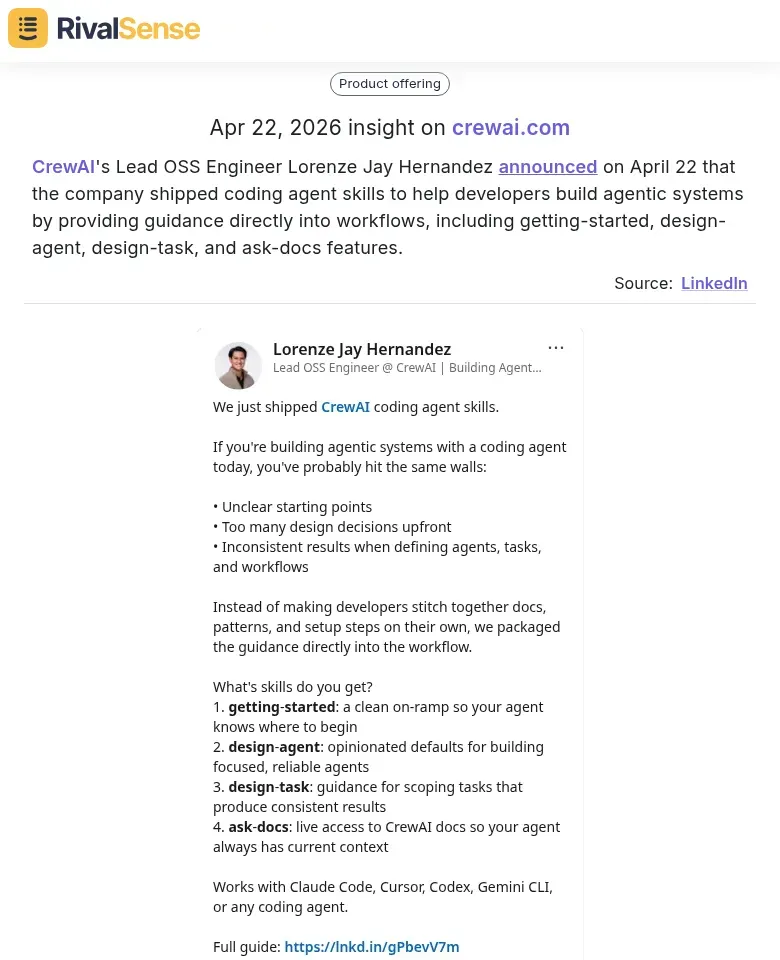

On April 22, CrewAI's Lead OSS Engineer Lorenze Jay Hernandez announced that the company had shipped coding agent skills designed to help developers build agentic systems. These skills (getting-started, design-agent, design-task, and ask-docs) deliver guidance directly inside developer workflows. This kind of competitive intelligence is invaluable for founders and CEOs: it signals exactly where a rival is investing resources and how it plans to deepen its moat. Knowing about product updates like these as they happen lets you anticipate market shifts, adjust your own roadmap, and stay ahead of the curve.

Implementation: Integrating CrewAI into Real-World Development Workflows

To integrate CrewAI into real-world development workflows, start with a pilot program. In the pilot, CrewAI agents were embedded directly into the GitHub Actions, GitLab CI, and Jenkins pipelines of 50 SaaS companies. This hands-on approach surfaced two critical technical milestones: achieving sub-second latency for code suggestions and reducing false positives in code review by 40%.

Practical Steps for Integration:

- Start Small: Begin with a single pipeline (e.g., GitHub Actions) and one agent task, like automated code review. Where the vendor provides sample repositories, use them as templates.

- Optimize for Speed: Ensure your CI environment can handle sub-second latency. Cache agent models and use lightweight inference engines.

- Tune False Positives: Run a shadow mode phase where agents suggest but don't block. Collect feedback to adjust sensitivity thresholds.
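The shadow-mode step can be turned into a simple tuning loop, sketched below under stated assumptions. The `ShadowFinding` record, its `confidence` score, and the helper names are hypothetical; nothing here reflects a documented CrewAI setting. The idea is to log each suggestion alongside the developer's verdict, then choose the lowest sensitivity threshold that keeps dismissed suggestions within an agreed budget.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShadowFinding:
    confidence: float   # agent's score for the finding, 0.0 to 1.0 (assumed field)
    accepted: bool      # True if a developer judged the finding valid


def false_positive_share(findings, threshold):
    """Share of findings at or above `threshold` that developers dismissed.

    Returns None when nothing would be surfaced at this threshold.
    """
    surfaced = [f for f in findings if f.confidence >= threshold]
    if not surfaced:
        return None
    dismissed = sum(1 for f in surfaced if not f.accepted)
    return dismissed / len(surfaced)


def pick_threshold(findings, candidates, max_fp_share):
    """Lowest candidate threshold whose false-positive share is within budget."""
    for t in sorted(candidates):
        share = false_positive_share(findings, t)
        if share is not None and share <= max_fp_share:
            return t
    return None


# Made-up shadow-mode log for illustration only.
log = [
    ShadowFinding(0.95, True), ShadowFinding(0.90, True), ShadowFinding(0.80, False),
    ShadowFinding(0.70, True), ShadowFinding(0.60, False), ShadowFinding(0.50, False),
]

chosen = pick_threshold(log, candidates=[0.5, 0.7, 0.9], max_fp_share=0.30)
print("chosen threshold:", chosen)
```

Choosing the lowest threshold that fits the budget keeps as many valid findings as possible; a stricter team could instead pick the highest threshold that still surfaces a minimum number of findings.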

Change Management Checklist:

- Provide extensive documentation and runbooks.

- Assign a dedicated developer relations (DevRel) team for onboarding.

- Host weekly office hours for the first month.

Pro Tip: Use feature flags to roll out agents gradually—start with non-critical repos to build confidence before expanding to production pipelines.
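A gradual rollout of this kind is often implemented with a deterministic percentage flag. The sketch below is one possible approach using only the Python standard library; the function names, the flag name, and the repository names are hypothetical, and a team already using a feature-flag service would rely on that service instead.

```python
import hashlib


def rollout_bucket(repo: str, flag: str) -> int:
    """Map a repo to a stable bucket in [0, 100) for a given flag."""
    digest = hashlib.sha256(f"{flag}:{repo}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def agent_enabled(repo: str, flag: str, percent: int,
                  critical_repos: frozenset[str] = frozenset()) -> bool:
    """Enable the agent for roughly `percent`% of non-critical repos."""
    if repo in critical_repos:
        return False  # critical repos stay off until explicitly removed from the set
    return rollout_bucket(repo, flag) < percent


# Hypothetical repository names for illustration only.
repos = ["docs-site", "internal-tools", "billing-service", "marketing-pages"]
critical = frozenset({"billing-service"})

for pct in (10, 50, 100):
    enabled = [r for r in repos if agent_enabled(r, "code-review-agent", pct, critical)]
    print(f"{pct}% rollout -> {enabled}")
```

Because the bucket depends only on the flag and repo names, a repo that is enabled at 10% stays enabled as the percentage rises, so teams do not see the agent flicker on and off between rollout stages.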

Results and Impact: Measurable Gains in Developer Productivity and Code Quality

CrewAI's coding agents delivered measurable productivity and quality gains for pilot customers. On average, code review cycle time dropped by 30%, with some teams reporting 50% faster PR merges. This acceleration came from agents automatically flagging common issues, suggesting fixes, and routing reviews to the right developers. To replicate this, start by identifying your team's review bottlenecks—such as waiting for specific reviewers—and configure agents to handle repetitive checks first.

Bug detection improved by 25% in pre-production, leading to a 15% reduction in post-release incidents. Agents now scan for security vulnerabilities, style inconsistencies, and logic errors before human review. Teams can maximize this by defining custom rules for their codebase and gradually expanding agent coverage from critical paths to all modules.

Developer satisfaction soared: Net Promoter Score jumped from 32 to 68. Users appreciated reduced context-switching and faster feedback loops. For a smooth rollout, introduce agents in a non-blocking mode (suggestions only), gather feedback, then enable automated actions. Track time saved per developer per week and share wins to build buy-in. The key is treating agents as collaborative partners, not replacements—they handle the grunt work so humans can focus on architecture and innovation.

Lessons Learned and Future Outlook

CrewAI's pivot to coding offers three key lessons for agentic AI builders. First, specialization beats generalization. By focusing on a single vertical—code generation and review—CrewAI saw engagement spike. Horizontal agents often spread themselves thin; vertical agents become indispensable. Actionable tip: Identify one high-value workflow in your target domain and obsess over it before expanding.

Second, seamless integration is non-negotiable. Agents must plug into existing toolchains (IDEs, CI/CD pipelines, Git workflows) without friction. CrewAI’s success came from embedding directly into VS Code and GitHub. Checklist for integration:

- Does your agent work with the user’s current tools?

- Can it be invoked without leaving the primary interface?

- Does it respect existing permissions and workflows?

Third, expand methodically. CrewAI’s roadmap targets adjacent developer domains—security scanning, infrastructure-as-code—while doubling down on coding excellence. Strategy: Build a “core + halo” model: perfect one core use case, then add adjacent capabilities that share the same interaction patterns.

Future outlook: Vertical agents will outpace horizontal ones in adoption. The winners will be those who combine deep domain expertise with frictionless integration—and then expand in concentric circles from their stronghold.

Stay Ahead of Competitor Moves with RivalSense

Tracking strategic pivots like CrewAI's is essential for making informed business decisions. RivalSense monitors competitor product launches, pricing updates, event participations, partnerships, regulatory changes, management shifts, and media mentions across websites, social media, and public registries—then delivers a concise weekly email report. Try RivalSense for free at https://rivalsense.co/ and get your first competitor report today.
